Most AI initiatives do not fail because of technology.

They fail because teams try to execute them like normal software projects.

That is the core problem Week 2 addresses.

If you understand how AI programs actually evolve, your execution decisions become clearer. If you do not, you will keep pushing things to production that are not ready.

The Illusion of “Let Us Just Build It”

Here is a common situation.

A business leader says,

“Let us build an AI feature for this.”

The team gets excited. Engineering starts exploring. A model is picked. Work begins.

And then after a few weeks:

- Results are inconsistent

- Stakeholders are unsure about value

- No one knows if this should go to production

This happens because the team skipped something critical.

They skipped lifecycle thinking.

AI Programs Do Not Start with Development

In traditional software, you can start with requirements and move forward.

In AI programs, that approach breaks.

Before building anything, you need to answer:

- Is this problem even solvable using AI?

- Do we have enough data?

- Will the output be useful in real scenarios?

That is why AI programs follow a different path.

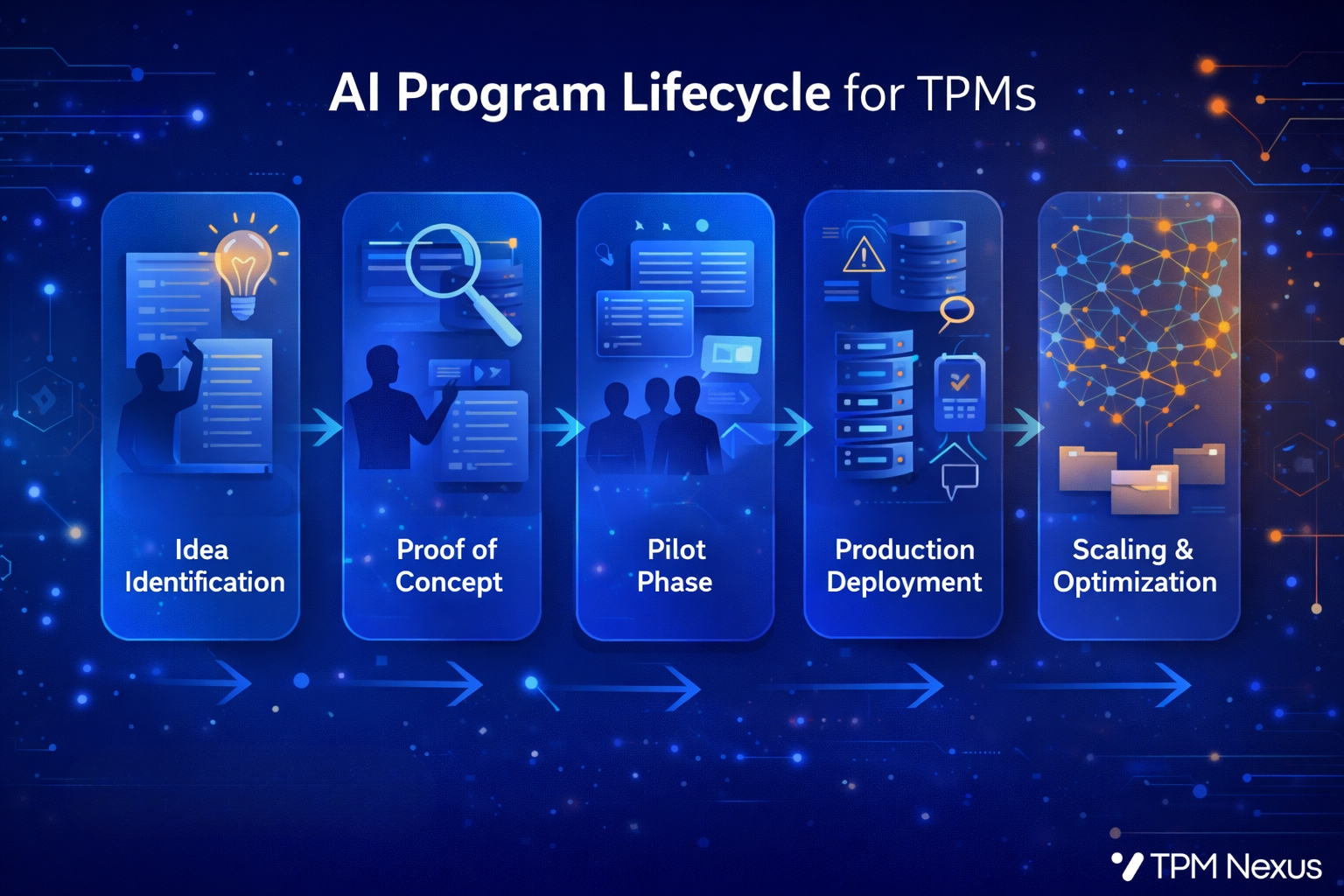

The Real AI Program Lifecycle

Let us simplify the lifecycle into five stages:

1. Idea Identification

This is where everything starts.

But this is not just brainstorming features.

A strong TPM ensures:

- The problem is clear

- There is real business value

- AI is actually needed

Example

Instead of saying:

“Let us build an AI chatbot”

Ask:

“Can we reduce support tickets by 30 percent using automation?”

That changes the direction completely.
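
A goal framed this way can be checked with simple arithmetic. The sketch below is illustrative only: the function names and the ticket counts are assumptions, not figures from a real program.

```python
def ticket_reduction(baseline_tickets: int, current_tickets: int) -> float:
    """Fraction by which ticket volume dropped relative to the baseline period."""
    if baseline_tickets <= 0:
        raise ValueError("baseline_tickets must be positive")
    return (baseline_tickets - current_tickets) / baseline_tickets


def meets_target(baseline_tickets: int, current_tickets: int, target: float = 0.30) -> bool:
    """True when the measured reduction reaches the agreed target (30 percent by default)."""
    return ticket_reduction(baseline_tickets, current_tickets) >= target


# Hypothetical monthly volumes: 1000 tickets before automation, 680 after.
reduction = ticket_reduction(1000, 680)
on_track = meets_target(1000, 680)
```

With a number like this agreed up front, "did the chatbot work?" becomes a question the team can actually answer.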

2. Proof of Concept (PoC)

This is where most TPMs make mistakes.

PoC is not about building a polished product.

It is about answering one question:

“Can this work at all?”

Example

- Take a small dataset

- Try a basic model

- Validate output quality

If the basic model gets 60 to 70 percent of cases right, that is already a useful insight.

The goal is learning, not perfection.
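
As a rough illustration of the three PoC steps above, here is a minimal sketch. It assumes a handful of hand-labeled support messages and uses a keyword rule as a stand-in for "a basic model". The data, the labels, and the function names are all hypothetical.

```python
def poc_accuracy(examples, predict):
    """Fraction of labeled examples where predict(text) matches the expected label."""
    if not examples:
        raise ValueError("a PoC needs at least one labeled example")
    correct = sum(1 for text, expected in examples if predict(text) == expected)
    return correct / len(examples)


def keyword_baseline(text):
    """Hypothetical baseline: flag a message as 'billing' if it mentions an invoice or refund."""
    lowered = text.lower()
    return "billing" if "invoice" in lowered or "refund" in lowered else "other"


# Tiny hand-labeled sample (illustrative data, not real results).
sample = [
    ("Where is my invoice?", "billing"),
    ("I want a refund", "billing"),
    ("App crashes on login", "other"),
    ("I was charged twice", "billing"),
    ("How do I reset my password?", "other"),
]

accuracy = poc_accuracy(sample, keyword_baseline)
misses = [text for text, expected in sample if keyword_baseline(text) != expected]
```

The list of misses is often more valuable than the score itself, because it shows what kind of data or model the next iteration needs.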

3. Pilot Phase

Now you test in a controlled real environment.

This is where things become real.

What changes here:

- Real users interact with the system

- Edge cases start appearing

- Feedback becomes critical

Example

Deploy the chatbot to only 10 percent of users.

Now observe:

- Where it fails

- What users actually ask

- How they react to wrong responses

This phase tells you whether the idea is practical.
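
One common way to expose a feature to a fixed share of users is deterministic bucketing on a user ID. The sketch below is one possible approach, not a prescribed one. The function name, the salt value, and the ID format are assumptions.

```python
import hashlib


def in_pilot(user_id: str, percent: int = 10, salt: str = "chatbot-pilot") -> bool:
    """Return True if this user falls into the pilot group.

    The same user always gets the same answer, so nobody flips between
    the old and new experience from one session to the next.
    """
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    return bucket < percent


# Hypothetical usage: decide which experience to show.
experience = "chatbot" if in_pilot("user-42") else "existing support form"
```

Because the assignment is stable, feedback and failures from the pilot group can be traced back to the same users over time.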

4. Production Deployment

Only after learning from the pilot should you move here.

This is where many teams rush and regret it later.

At this stage, a TPM ensures:

- Clear success metrics are defined

- Monitoring is in place

- Fallback mechanisms exist

Example

If the chatbot fails, route to a human agent.

Do not leave users stuck.
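
A fallback like this can be sketched in a few lines. The example assumes the bot returns an answer together with a confidence score. The `BotReply` type, the 0.7 threshold, and the handoff message are all hypothetical choices for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass
class BotReply:
    text: Optional[str]  # None means the bot produced no answer
    confidence: float    # hypothetical score between 0.0 and 1.0


HANDOFF_MESSAGE = "Connecting you to a support agent."


def answer_or_escalate(
    question: str,
    bot: Callable[[str], BotReply],
    min_confidence: float = 0.7,
) -> Tuple[str, str]:
    """Return ("bot", answer) or ("human", handoff message)."""
    try:
        reply = bot(question)
    except Exception:
        # The model call itself failed: never leave the user without a path forward.
        return ("human", HANDOFF_MESSAGE)
    if not reply.text or reply.confidence < min_confidence:
        # Empty or low-confidence answer: hand off instead of guessing.
        return ("human", HANDOFF_MESSAGE)
    return ("bot", reply.text)
```

The threshold is a product decision as much as a technical one: a higher value sends more conversations to human agents, a lower one lets more wrong answers through.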

5. Scaling and Optimization

AI systems are never “done”.

They keep improving only if you keep working on them.

Focus areas:

- Better data

- Model improvements

- Continuous feedback loops

Example

Use past conversations to retrain and improve accuracy.

Real Scenario: Where Teams Go Wrong

Let us take a real-world example.

A company wants to automate resume screening using AI.

What they do:

- Build a model

- Integrate it into the system

- Push to production

What happens:

- Model rejects good candidates

- Bias issues appear

- Hiring team loses trust

Why?

Because they skipped:

- Proper PoC validation

- Controlled pilot

- Stakeholder alignment

A TPM’s role is to prevent this.

The Stakeholder Complexity in AI Programs

AI programs are not just engineering-driven.

They involve multiple stakeholders:

- Business teams: define value and outcomes

- Engineering teams: build systems and integrations

- Data teams: prepare and manage data

- AI or ML teams: build and tune models

And often:

- Legal or compliance

- Operations teams

Why This Matters for TPMs

In traditional programs, coordination is mostly within engineering and product.

In AI programs, your job expands:

- Align expectations across different teams

- Translate between business goals and model capabilities

- Manage dependencies that are not always visible

Example

Business says: “We need 95 percent accuracy”

AI team says: “With current data, 80 percent is realistic”

Now you step in:

- Define acceptable thresholds

- Align on trade-offs

- Set realistic expectations

Common Mistakes TPMs Make in the AI Lifecycle

- Starting with the solution instead of the problem

- Skipping PoC and jumping to build

- Treating pilot like production

- Ignoring stakeholder alignment

- Not defining success criteria early

These mistakes do not look big initially.

But they create major failures later.

What You Should Start Doing Now

Take any AI idea and walk it through this lifecycle:

- What is the real problem?

- Can we validate it through a PoC?

- How will we test it in a pilot?

- What defines success in production?

If you can answer these clearly, your execution maturity is already ahead of most teams.

Final Thought

AI programs are not about building faster.

They are about learning faster before committing to scale.

And that is what separates a good TPM from someone just managing timelines.

Want to Go Deeper?

If you want to learn how to run real AI programs from idea to production with clarity, join the TPM GenAI Cohort Program: www.tpmnexus.pro