Everyone is still debating Waterfall vs Agile vs Hybrid.

However, that debate is already outdated.

AI does not care which framework you follow.

Why AI Programs Fail in Real Execution

Let us start with a real situation.

A team spends weeks planning an AI feature.

- Roadmap is clean

- Dependencies are mapped

- Execution looks structured

Everything seems right.

But then AI enters the workflow.

On day one in production:

- Outputs are inconsistent

- Edge cases behave differently

- Prompts need constant tuning

- What worked yesterday does not work today

So now ask yourself.

Where exactly does your “perfect methodology” fit here?

Why Waterfall Breaks Early

Waterfall depends on one assumption.

You can define everything upfront.

But in AI systems, that assumption does not hold.

You do not fully understand behavior until you test it. In addition, edge cases only appear with real usage.

As a result, plans become outdated very quickly.

The more you try to lock things down early, the faster your plan loses relevance.

Why Agile Feels Right but Still Fails

Agile introduces flexibility. That part helps.

You iterate. You test. You refine.

However, most teams slowly drift into something else.

Iteration turns into randomness.

- Try a prompt

- Adjust wording

- Test again

At first, this looks like progress. But over time, intent disappears.

Instead of structured iteration, teams start guessing.

And guessing at scale becomes expensive.
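
One way to keep iteration structured is to score every prompt change against the same fixed set of cases, rather than judging it by eye. The sketch below is only an illustration: the cases, the substring check, and `fake_generate` (a stand-in for a real model call) are all assumptions, not part of any particular tool.

```python
from typing import Callable

# Hypothetical fixed cases: (user input, text the answer must contain).
CASES = [
    ("How do I reset my password?", "reset"),
    ("What is your refund policy?", "refund"),
    ("Cancel my subscription", "cancel"),
]

def pass_rate(generate: Callable[[str, str], str], prompt: str, cases=CASES) -> float:
    """Fraction of fixed cases whose output contains the expected text."""
    passed = sum(
        1
        for user_input, expected in cases
        if expected in generate(prompt, user_input).lower()
    )
    return passed / len(cases)

def fake_generate(prompt: str, user_input: str) -> str:
    """Stand-in for a real model call, so the sketch runs offline."""
    # Pretend the model only restates the topic when the prompt asks for it.
    if "restate the topic" in prompt:
        return f"Regarding '{user_input}': here is what to do."
    return "Thanks for reaching out."

baseline = pass_rate(fake_generate, "You are a support agent.")
candidate = pass_rate(
    fake_generate, "You are a support agent. Always restate the topic."
)

# Adopt the new prompt only if it does not regress on the fixed cases.
adopt = candidate >= baseline
```

With a check like this, "try a prompt, adjust wording, test again" keeps its intent: each change is compared against a baseline instead of against memory.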

The Trap of Vibe Coding

Let us be honest.

We have all done this.

Try something. Adjust it. Try again.

It feels fast and productive.

But then someone asks a simple question.

“Can we rely on this in production?”

That is where everything breaks.

Because now you face:

- No consistency

- No repeatability

- No system

At this point, you do not have execution.

You have experimentation.

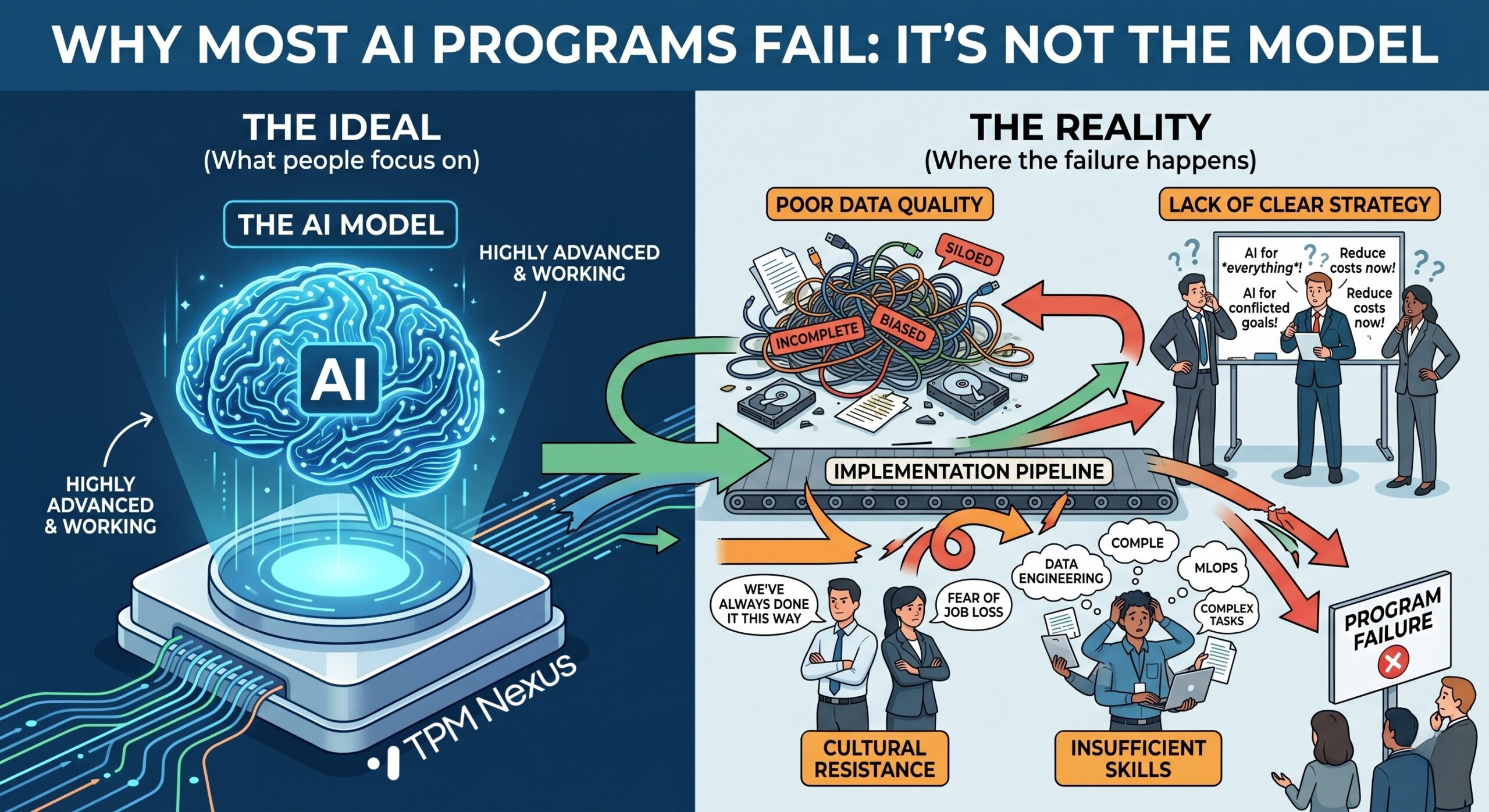

The Real Reason Why AI Programs Fail

Most people assume the issue is the model.

That is not true.

AI programs fail because there is no execution system around the model.

What AI Changes Fundamentally

In traditional systems, outputs are controlled through logic.

In AI systems, control shifts.

You no longer control outputs directly. Instead, you control:

- The workflow around it

- The boundaries within which it operates

- How outputs are validated

- How failures are handled

If these elements are missing, the system becomes unreliable.

In other words, you are not running a system. You are depending on AI.
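
The four controls above can be sketched as a thin wrapper around the model call. This is a minimal illustration under stated assumptions: the JSON output shape, the allowed categories, the retry count, and the human-handoff fallback are all hypothetical choices, and `make_flaky_model` simply simulates an unreliable model.

```python
import json
from typing import Callable, Optional

ALLOWED_CATEGORIES = {"billing", "technical", "account"}  # hypothetical boundary
FALLBACK = {"category": "unknown", "handoff": True}       # hypothetical: route to a human

def validate(raw: str) -> Optional[dict]:
    """Return the parsed output if it stays within the boundaries, else None."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    category = data.get("category")
    if not isinstance(category, str) or category not in ALLOWED_CATEGORIES:
        return None
    return {"category": category, "handoff": False}

def guarded_call(model: Callable[[str], str], ticket: str, max_attempts: int = 3) -> dict:
    """Workflow around the model: call, validate, retry, then fall back."""
    for _ in range(max_attempts):
        result = validate(model(ticket))
        if result is not None:
            return result
    return dict(FALLBACK)

def make_flaky_model(responses):
    """Simulated model that returns the given responses in order."""
    remaining = iter(responses)
    return lambda ticket: next(remaining)

# First answer is malformed, second one passes validation.
recovered = guarded_call(
    make_flaky_model(["not json", '{"category": "billing"}']),
    "I was charged twice",
)

# Every answer is out of bounds, so the workflow falls back to a human.
escalated = guarded_call(
    make_flaky_model(["oops", "{}", '{"category": "sales"}']),
    "hello",
)
```

The model is still unpredictable in this sketch. What changes is that the caller only ever sees a validated result or an explicit handoff.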

Where Most Teams Go Wrong

Teams often focus on visible aspects:

- Which model to use

- Prompt engineering tricks

- Cost per token

Meanwhile, critical elements are ignored:

- End-to-end workflow design

- Output validation mechanisms

- Failure handling strategy

- Execution consistency

Because of this, teams build demos.

Not systems.

A Real Scenario Where It Breaks

Consider a support automation use case.

The goal is simple. Automate responses using AI.

During testing:

- The system performs well

- Responses look correct

However, in production:

- Users ask unexpected questions

- Data inconsistencies appear

- Responses vary in quality

As a result:

- Trust drops

- Escalations increase

- The system is partially rolled back

The model did not fail.

The execution design did.

Execution Design Is the Real Work

Execution design is not about planning more.

It is about defining control.

You need clarity on:

- Where AI fits in the system

- What happens when it fails

- How outputs are validated

- How consistency is enforced

Only then does the system become stable.
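
Consistency is easier to enforce once it is measured. One simple option, assumed here rather than prescribed, is to run the same input several times and check how often the outputs agree. The 0.9 threshold and the scripted model below are illustrative placeholders.

```python
from collections import Counter
from typing import Callable

def agreement_rate(model: Callable[[str], str], prompt: str, runs: int = 5) -> float:
    """Share of runs that produce the most common normalized output."""
    outputs = [model(prompt).strip().lower() for _ in range(runs)]
    most_common_count = Counter(outputs).most_common(1)[0][1]
    return most_common_count / runs

def make_scripted_model(responses):
    """Simulated model that returns the given responses in order."""
    remaining = iter(responses)
    return lambda prompt: next(remaining)

model = make_scripted_model(
    ["Approved", "approved ", "Denied", "Approved", "Approved"]
)
rate = agreement_rate(model, "Should this refund be approved?", runs=5)

# Hypothetical release gate: block the rollout if agreement is too low.
stable = rate >= 0.9
```

Exact-match agreement is a crude measure that suits short, categorical outputs. Free-form text would need a looser comparison.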

What Actually Scales

The teams that succeed think differently.

They treat AI as a system problem, not a model problem.

Therefore, they focus on:

- Workflow design instead of features

- Validation layers instead of assumptions

- Feedback loops instead of one-time releases

Because of this approach, their systems improve over time.
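
A feedback loop can be as small as turning every rejected production output into a case that is re-tested before the next release. The class below is a hypothetical sketch of that idea. The `accepted` flag is assumed to come from a reviewer or a user rating, and the sample inputs are invented for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class FeedbackLoop:
    """Collects production failures and turns them into regression cases."""
    regression_cases: list = field(default_factory=list)

    def record(self, user_input: str, output: str, accepted: bool) -> None:
        # Only rejected outputs become new cases; duplicate inputs are skipped.
        if not accepted and user_input not in self.regression_cases:
            self.regression_cases.append(user_input)

loop = FeedbackLoop()
loop.record("Where is my invoice?", "Here is your invoice link.", accepted=True)
loop.record("Refund for order 123?", "I cannot help with that.", accepted=False)
loop.record("Refund for order 123?", "Please contact sales.", accepted=False)

# loop.regression_cases now holds the inputs to re-test before the next release.
```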

Final Thought

Frameworks are not the issue.

Models are not the issue.

Execution design is the difference.

If you treat AI like traditional software, problems will keep appearing.

However, if you design systems around AI behavior, those problems can be managed before they scale.

That is what separates experimentation from real execution.

If you want to move from experiments to real AI execution, visit www.tpmnexus.pro